I am a Research Scientist at Google DeepMind, where I work on safe coding agents and formal-verification-based safety mechanisms for large language model agents. Previously, I was an Applied Scientist at Amazon AWS AI Lab, where I shipped a production-grade agent verification module that is now integrated into a flagship AWS product used by enterprise customers.

I completed my Ph.D. in Computer Science at the University of Pennsylvania in 2025, advised by Insup Lee and Osbert Bastani. My research focuses on agentic AI safety, alignment, uncertainty quantification, and LLM evaluation — with the long-term goal of building reliable, secure, and verifiable LLM agent systems. My work has been published at NeurIPS, ICLR, ICML, NAACL, EMNLP, and KDD, including a Spotlight at NeurIPS 2024 and a Best Paper at the 2023 TEACH workshop at ICML.

I'm always open to collaboration on agentic safety and trustworthy AI — feel free to reach out.

Research Scientist, Google DeepMind

Applied Scientist, Amazon AWS AI Lab

04.1 Featured

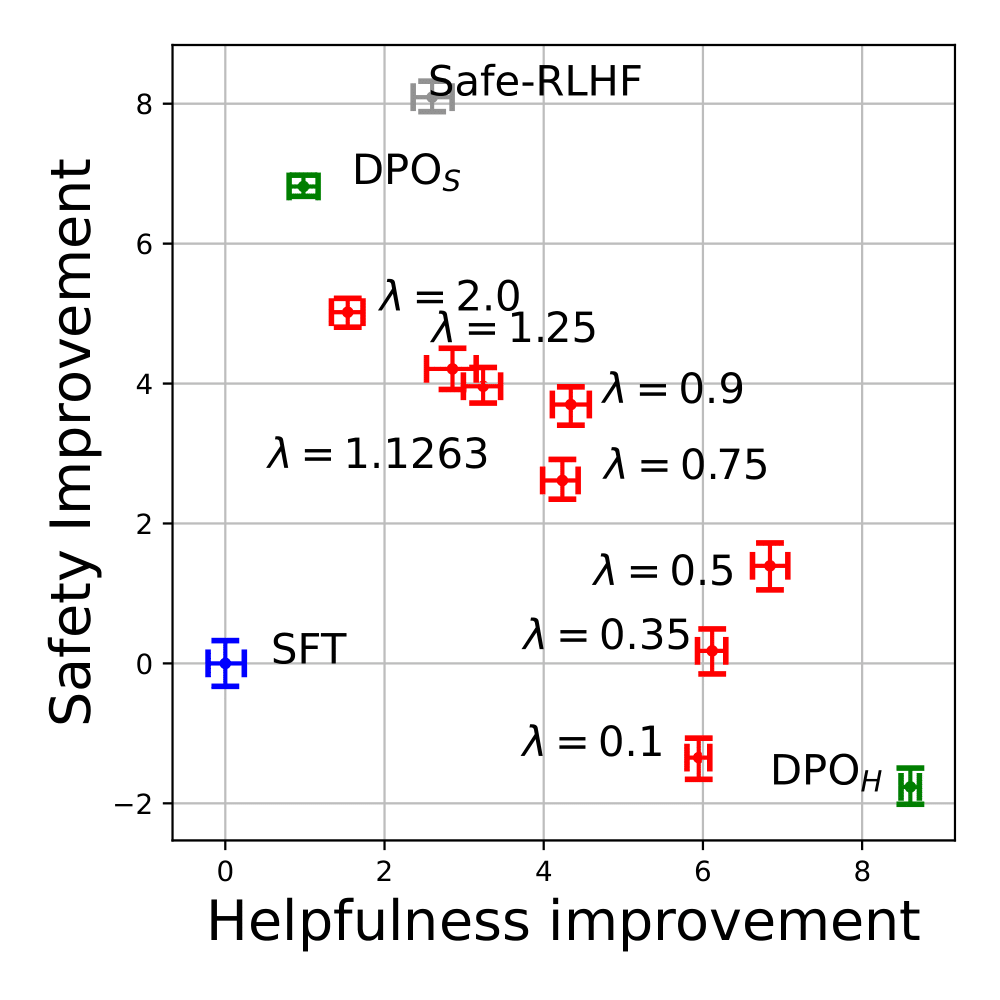

TL;DR: A one-shot safety alignment algorithm that navigates the helpfulness/safety trade-off via convex dualization, reducing computational complexity by ~90%.

Abstract: The growing safety concerns surrounding LLMs raise an urgent need to align them with diverse human preferences. We present a dualization perspective that reduces constrained alignment to an equivalent unconstrained problem by pre-optimizing a smooth and convex dual function with a closed form. This eliminates cumbersome primal-dual policy iterations, greatly reducing computational burden and improving training stability. Our strategy yields two practical algorithms (MoCAN and PeCAN) in model-based and preference-based settings.

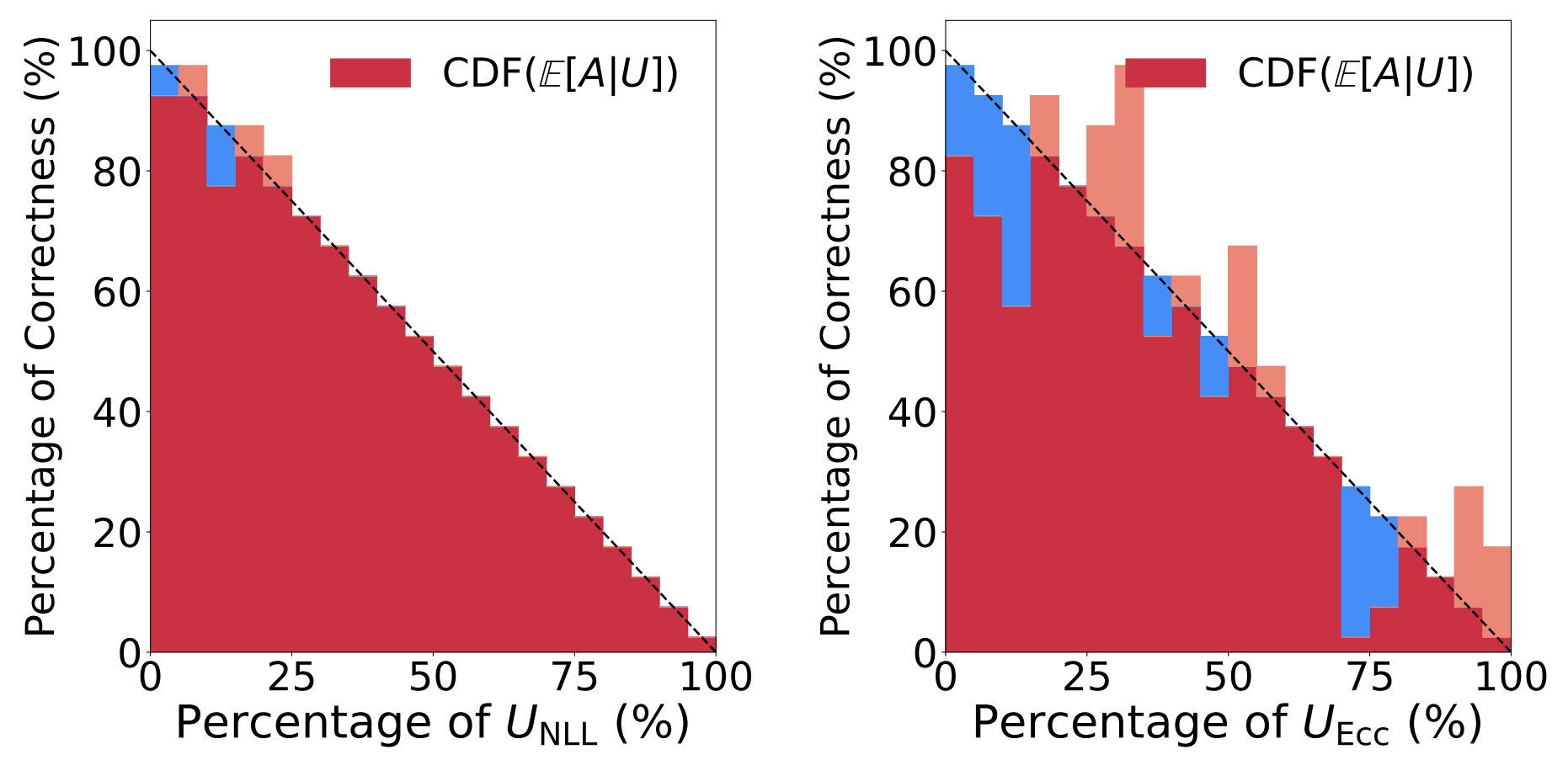

TL;DR: A rank-based framework for assessing LLM uncertainty measures, robust to the differing value ranges of existing metrics and free of ad-hoc thresholds.

Abstract: Many uncertainty measures (semantic entropy, affinity-graph-based measures, etc.) have been proposed for language models, but they take values over different ranges, making them hard to compare. We develop Rank-Calibration, a principled framework whose key tenet is that higher uncertainty should imply lower generation quality on average. Rank-Calibration quantifies deviations from this ideal without requiring ad-hoc thresholding of correctness scores. We demonstrate broad applicability and interpretability empirically.

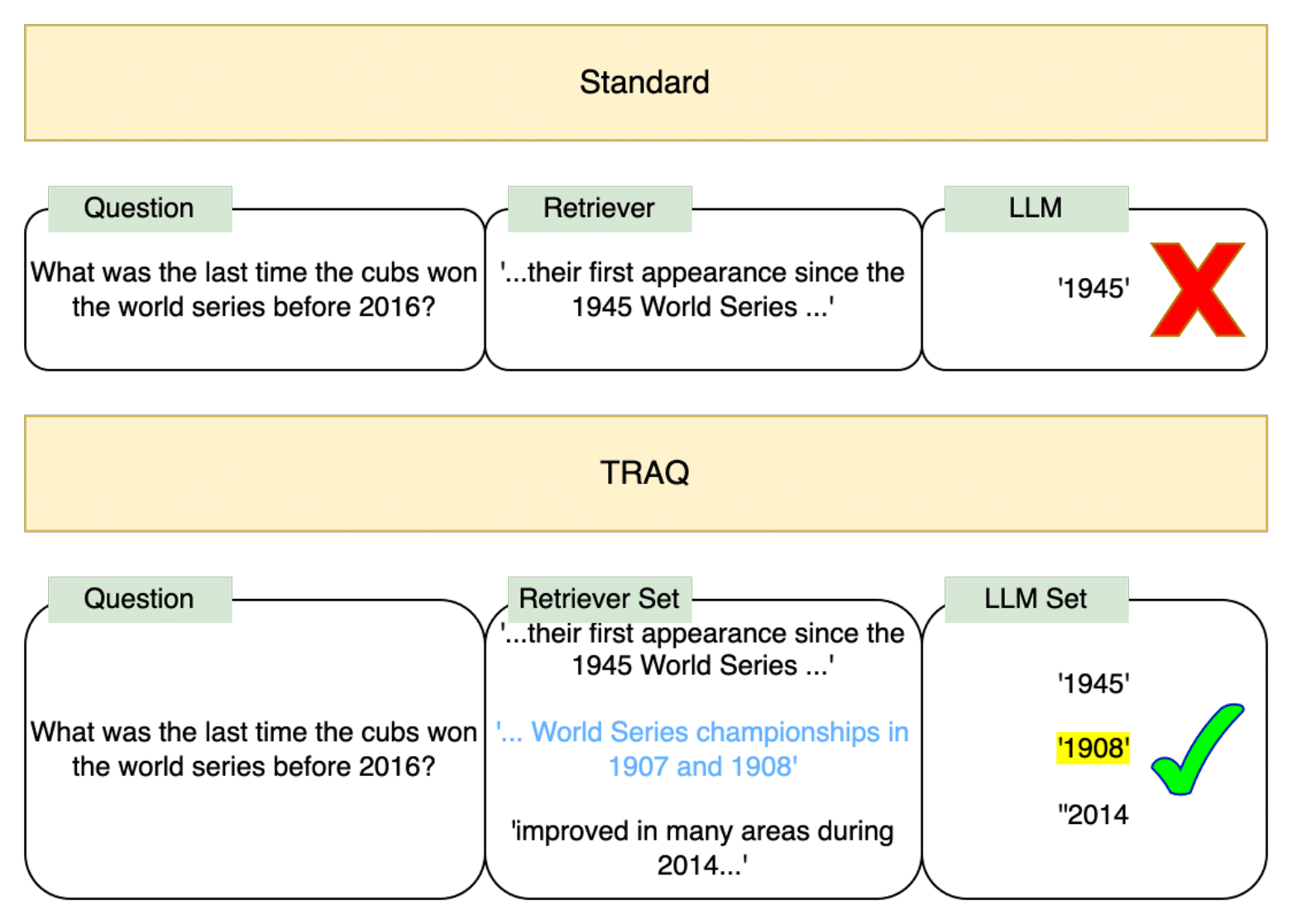

TL;DR: The first end-to-end statistical correctness guarantee for retrieval-augmented generation, combining conformal prediction with Bayesian optimization.

Abstract: When applied to open-domain question answering, LLMs frequently generate incorrect responses based on hallucinated facts. RAG mitigates hallucinations but provides no correctness guarantees. We propose TRAQ, which uses conformal prediction to construct prediction sets guaranteed to contain the semantically correct response with high probability, and Bayesian optimization to minimize set size. Empirically, TRAQ provides the desired correctness guarantee while reducing prediction set size by 16.2% on average compared to ablations. Also: Best Paper, 2023 TEACH Workshop @ ICML.

04.2 — 2026 / Under Review

04.3 — 2025

04.4 — 2024

04.5 — 2023

04.6 — Earlier